Sign2Sign – A First Attempt

Author:

Titus Weng ’26

Co-Authors:

Faculty Mentor(s):

SingChun Lee, Computer Science

Funding Source:

Gary & Sandy Sojka Fund for Research, Teaching & Scholarship in Developmental Disabilities, Neuroscience & Human Health Fund.

Abstract

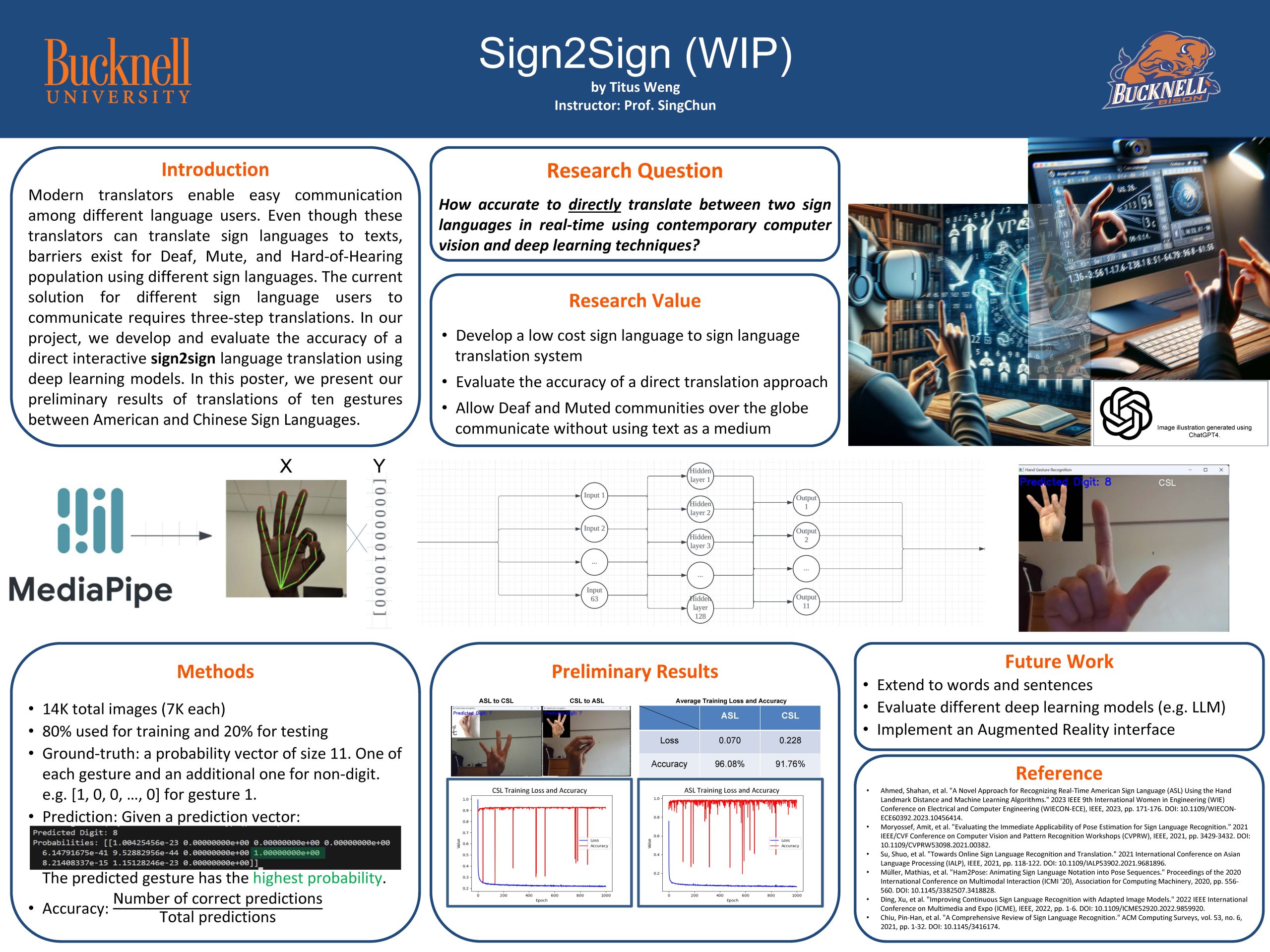

At the core of human engagement is communication. While technological advances make it convenient for speakers of different spoken languages to communicate through translation, millions of Deaf, Mute, and Hard-of-Hearing people still face immense hurdles because accessible tools for direct sign language translation are lacking. Our project aims to build Sign2Sign, a direct, immersive sign-to-sign translation tool using WebXR that takes input from any accessible camera and produces output on WebXR-supported platforms. This paper presents preliminary results for direct translation of ten gestures between American Sign Language (ASL) and Chinese Sign Language (CSL).
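The abstract describes a camera-to-WebXR pipeline but does not detail the step that turns a recognized ASL gesture into a CSL output. The following is a minimal sketch of that step only, assuming a recognizer that emits one confidence score per gesture class and a concept vocabulary shared by both languages. The concept names, the clip identifiers, the 0.6 threshold, and the function `translateAslToCsl` are all hypothetical, and only four of the ten gestures are shown for brevity.

```typescript
// Hypothetical shared concept vocabulary (the paper's actual ten gestures are not listed).
type Concept = "HELLO" | "THANK_YOU" | "YES" | "NO";

// Order must match the order of the recognizer's per-class scores (assumption).
const CONCEPTS: Concept[] = ["HELLO", "THANK_YOU", "YES", "NO"];

// Hypothetical identifiers of CSL animation clips that a WebXR scene could play.
const CSL_CLIPS: Record<Concept, string> = {
  HELLO: "csl_hello",
  THANK_YOU: "csl_thank_you",
  YES: "csl_yes",
  NO: "csl_no",
};

// Pick the highest-scoring ASL concept and return the matching CSL clip id,
// or null when the recognizer is not confident enough.
function translateAslToCsl(scores: number[], threshold = 0.6): string | null {
  if (scores.length !== CONCEPTS.length) {
    throw new Error(`expected ${CONCEPTS.length} scores, got ${scores.length}`);
  }
  let best = 0;
  for (let i = 1; i < scores.length; i++) {
    if (scores[i] > scores[best]) best = i;
  }
  if (scores[best] < threshold) return null;
  return CSL_CLIPS[CONCEPTS[best]];
}

console.log(translateAslToCsl([0.05, 0.85, 0.05, 0.05])); // csl_thank_you
console.log(translateAslToCsl([0.3, 0.3, 0.2, 0.2])); // null
```

Rejecting low-confidence predictions keeps the output avatar from signing a guess when the input is ambiguous; a fuller system would probably also smooth predictions across several camera frames before committing to a sign.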